Google closed its original A/B split-testing platform, Google Website Optimizer, in August 2012 and replaced it with Google Experiments. Was it a good thing?

The Good

Experiments is integrated with Google Analytics, which increases its functionality from the Optimizer days.

This makes things easier, since you now have only one place to log in to. On the flip side, you now have to know how to create Goals, since Experiments uses Goals as its conversion points.

The Bad

You have to learn how to set up Analytics Goals. This may not be a bad thing, since knowing how to do this will increase your knowledge, which will allow you to improve your sites overall. For some reason, the place where you view Goals and the place where you set them up are completely different. To view them, go to the Analytics Conversions menu and click on Goals. To add Goals, view this page on Adding Google Analytics Goals or this video (come back in a few days). It needs more explanation than this article was intended for.

The other item which (as a coder) I consider bad is that I have to create two separate pages with different URLs. I have not yet tried just using a URL variable, like:

http://www.mysite.com/primary-url?test=1

Update coming shortly.
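In the meantime, here is a rough sketch of what the URL-variable idea might look like on the server side. It assumes a hypothetical `test` query parameter where `test=1` selects the variation and anything else falls back to the original page. The function name `pick_variation` is made up for illustration, and whether Experiments will accept such a URL as a variation is exactly the part that has not been tried yet.

```python
from urllib.parse import urlparse, parse_qs


def pick_variation(url: str) -> str:
    """Return 'variation' when the URL carries test=1, otherwise 'original'."""
    query = parse_qs(urlparse(url).query)
    # parse_qs maps each key to a list of values; use the first one,
    # and treat a missing parameter as "0" (hypothetical convention).
    value = query.get("test", ["0"])[0]
    return "variation" if value == "1" else "original"


if __name__ == "__main__":
    print(pick_variation("http://www.mysite.com/primary-url?test=1"))
    print(pick_variation("http://www.mysite.com/primary-url"))
```

The appeal of this approach is that one template serves both versions, so there is only one page to maintain.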

I also noticed that while the test is running, the stats are different on the Main view, the Ecommerce view and the Page Metric (located in a drop-down next to Advanced Segments). This can be a nuisance when trying to get accurate numbers for reporting purposes.

The Ugly

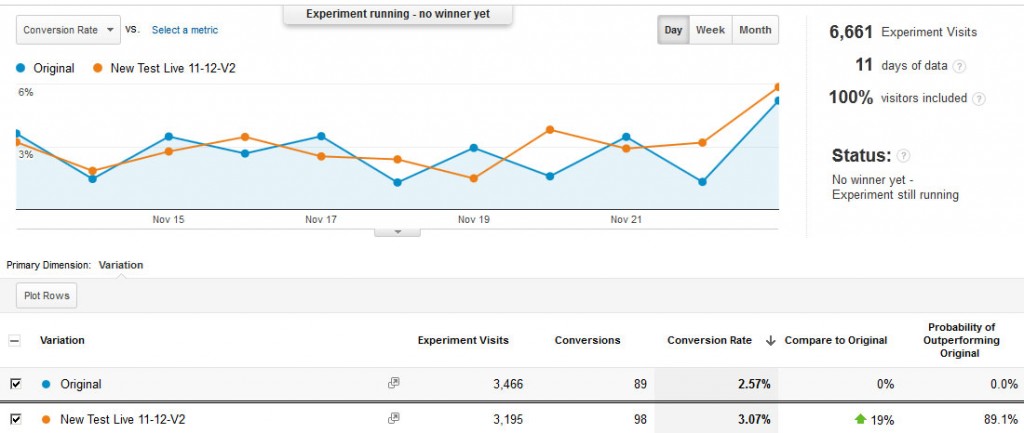

Google Experiments don’t appear to be a true 50/50 split. Looking at the image above, we can see that the variation that is winning is still lagging behind in page views. They use a method called the multi-armed bandit (MAB for short). As they state:

Once per day, we take a fresh look at your experiment to see how each of the variations has performed, and we adjust the fraction of traffic that each variation will receive going forward. A variation that appears to be doing well gets more traffic, and a variation that is clearly underperforming gets less.
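To make that daily reallocation a little more concrete, here is a minimal sketch of one common way a bandit can turn results into traffic shares: sample each variation's conversion rate from a Beta distribution and give each variation a share equal to how often it comes out on top. This is an assumption about the general technique, not a claim about Google's exact implementation, and the function name, the visit counts and the conversion counts are all invented for illustration.

```python
import random


def traffic_weights(stats, draws=10000, seed=0):
    """Estimate a traffic share for each variation.

    stats maps a variation name to (conversions, visits).
    Each share approximates the chance that the variation has the best
    conversion rate, using a Beta(1 + conversions, 1 + misses) belief.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    wins = {name: 0 for name in stats}
    for _ in range(draws):
        # Draw one plausible conversion rate per variation.
        sampled = {
            name: rng.betavariate(1 + conv, 1 + visits - conv)
            for name, (conv, visits) in stats.items()
        }
        # Credit the variation whose sampled rate is highest this round.
        wins[max(sampled, key=sampled.get)] += 1
    return {name: count / draws for name, count in wins.items()}


if __name__ == "__main__":
    # Hypothetical results after the first day of a test.
    day_one = {"original": (20, 500), "variation": (35, 500)}
    print(traffic_weights(day_one))
```

Note that this sketch looks at conversions only. It has no idea how much each conversion was worth.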

Well, that would be nice, except that I can’t decipher whether they are going off the conversion rate only or are also looking at an eCommerce indicator. All my testing points to the lack of an eCommerce indicator, and even that does not explain the less-than-50/50 traffic I have seen across a dozen tests on different sites. The problem with not looking at the eCommerce indicator is that I can have a lower conversion rate with a much higher per page value, meaning one version is causing people to buy much more.
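A quick bit of arithmetic shows how the two measures can disagree. The numbers below are made up, and "revenue per visit" is used here as a stand-in for the per page value, which is an assumption about how you would measure it.

```python
def summarize(visits, orders, revenue):
    """Return the conversion rate and the revenue per visit for one variation."""
    return {
        "conversion_rate": orders / visits,
        "revenue_per_visit": revenue / visits,
    }


# Hypothetical results: A converts more often, B sells more per visit.
version_a = summarize(visits=1000, orders=50, revenue=2000.0)
version_b = summarize(visits=1000, orders=40, revenue=2800.0)

if __name__ == "__main__":
    for name, result in (("A", version_a), ("B", version_b)):
        print(name, result)
```

Judged on conversion rate alone, version A wins in this made-up case, even though version B brings in more money from the same traffic.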

The Overview

If you’re looking to do simple testing to see if you can increase your conversions, this is a nice place to start. The price of Free makes it fit any budget. If you’re already doing eCommerce tracking with Analytics this may also be a viable solution for you, and it keeps all your stats in one organized place.

Its limitations of single A/B testing and no multivariate option do create some drawbacks for someone who is expecting to do some hardcore testing. I can understand the omission, because multivariate testing can get ugly and not deliver the increased conversions if you’re not careful.